Thanks all for the interest in this subject! Since December, my new version of gossip-observer has been running and collecting data, so I can reply with some insights from its preliminary results.

Replying in order:

Makes sense to me! I agree that just keeping the sketch up to date is likely best.


Relatedly, since the set key of a message has a blocknumber suffix, we could indeed skip the GETDATA round trip: both peers can compute which of them has the newer message for a specific channel (all other key fields would match, but the blocknumber would differ). I think this matches what Rusty suggested upthread.
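A minimal sketch of that comparison, assuming a hypothetical 64-bit set key whose upper 40 bits identify the channel (and direction) and whose lower 24 bits hold the blocknumber; the actual key layout in the proposal may differ. After decoding the sketch difference, each peer knows which differing keys it holds and which only the remote holds, so for any channel present on both sides it can tell who has the newer message without asking.

```python
# Hypothetical 64-bit set-key layout, for illustration only (not from the spec):
#   upper 40 bits: truncated channel (and direction) identifier
#   lower 24 bits: blocknumber the message was created at
BLOCK_BITS = 24
BLOCK_MASK = (1 << BLOCK_BITS) - 1
CHANNEL_MASK = (1 << 40) - 1


def make_key(channel_id: int, blocknumber: int) -> int:
    """Pack a channel identifier and a blocknumber into one set key."""
    return ((channel_id & CHANNEL_MASK) << BLOCK_BITS) | (blocknumber & BLOCK_MASK)


def split_key(key: int) -> tuple[int, int]:
    """Unpack a set key into (channel_id, blocknumber)."""
    return key >> BLOCK_BITS, key & BLOCK_MASK


def newest_by_channel(keys: list[int]) -> dict[int, int]:
    """Map each channel to the highest blocknumber among the given keys."""
    newest: dict[int, int] = {}
    for key in keys:
        chan, block = split_key(key)
        newest[chan] = max(block, newest.get(chan, block))
    return newest


def who_is_newer(local_only: list[int], remote_only: list[int]) -> dict[int, str]:
    """For channels that appear on both sides of the decoded difference,
    report which peer holds the newer message (higher blocknumber)."""
    local = newest_by_channel(local_only)
    remote = newest_by_channel(remote_only)
    return {
        chan: "local" if local[chan] > remote[chan] else "remote"
        for chan in local.keys() & remote.keys()
    }
```

For example, if the difference decodes to `local_only = [make_key(7, 900_000), make_key(9, 900_005)]` and `remote_only = [make_key(7, 900_010)]`, then `who_is_newer` reports that the remote has the newer message for channel 7, while channel 9 is simply missing on the remote side.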

IIUC, basically all of the messages are too big to be used directly as sketch elements, just due to the signatures and keys? And I think the use of TLVs will make the new messages slightly bigger. Maybe I’m misunderstanding the suggestion here?
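To put rough numbers on that: a tally of the fixed-size fields from BOLT 7 (ignoring variable-length features, addresses, and any TLV extensions) against the 64-bit maximum element size that Minisketch supports.

```python
# Fixed-size fields (bytes) of two BOLT 7 gossip messages, including the
# 2-byte message type. Variable-length fields (features, TLVs) are excluded,
# so these totals are lower bounds on the real wire size.
CHANNEL_UPDATE = {
    "type": 2, "signature": 64, "chain_hash": 32, "short_channel_id": 8,
    "timestamp": 4, "message_flags": 1, "channel_flags": 1,
    "cltv_expiry_delta": 2, "htlc_minimum_msat": 8, "fee_base_msat": 4,
    "fee_proportional_millionths": 4, "htlc_maximum_msat": 8,
}
CHANNEL_ANNOUNCEMENT = {
    "type": 2, "node_signature_1": 64, "node_signature_2": 64,
    "bitcoin_signature_1": 64, "bitcoin_signature_2": 64, "len": 2,
    "chain_hash": 32, "short_channel_id": 8, "node_id_1": 33,
    "node_id_2": 33, "bitcoin_key_1": 33, "bitcoin_key_2": 33,
}

# Minisketch field sizes go up to 64 bits, i.e. 8 bytes per sketch element.
MAX_ELEMENT_BYTES = 64 // 8


def min_size(fields: dict[str, int]) -> int:
    """Sum of the fixed-size fields of a message."""
    return sum(fields.values())


for name, fields in [("channel_update", CHANNEL_UPDATE),
                     ("channel_announcement", CHANNEL_ANNOUNCEMENT)]:
    size = min_size(fields)
    print(f"{name}: >= {size} bytes, {size / MAX_ELEMENT_BYTES:.0f}x a sketch element")
```

This gives at least 138 bytes for a channel_update and 432 bytes for a channel_announcement, so even the smallest message is many times the size of the largest possible sketch element.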

Agreed, I’ll start working on some initial benchmarking. I have an ARM SBC similar to a Raspberry Pi 4 that I can use to benchmark Minisketch operations for the set and difference sizes we’re discussing here.
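When picking benchmark parameters, it helps that a Minisketch’s serialized size depends only on its capacity and field size (capacity × bits). A rough sketch of a sizing table plus a generic timing helper; the capacities are placeholders, and `decode_fn` in the note below stands in for whichever Minisketch binding ends up being benchmarked, not a real API.

```python
import math
import statistics
import time
from typing import Callable


def sketch_bytes(capacity: int, field_bits: int = 64) -> int:
    """Serialized size of a sketch that can recover up to `capacity`
    differences using `field_bits`-bit elements."""
    return math.ceil(capacity * field_bits / 8)


def median_runtime(fn: Callable[[], object], repeats: int = 20) -> float:
    """Median wall-clock time of fn() in seconds over `repeats` runs."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


# Placeholder capacities (expected differences per reconciliation round).
for capacity in (100, 500, 1000, 5000):
    print(capacity, sketch_bytes(capacity), "bytes")
```

On the SBC, something like `median_runtime(lambda: decode_fn(sketch))` would then be measured for each capacity. The median is used to damp outliers from other load on the board.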

Mm, not exactly; more so that some groups of nodes may either have trouble propagating their messages, or receive new messages with a large delay compared to the network average.

After reviewing some implementation code further, I’m more convinced that the P2P network is unlikely to be partitioned. In the worst case, nodes may miss messages from normal gossip flooding, but they can still receive them via ‘catchup’ behavior, such as requesting data with gossip_timestamp_filter messages or just requesting the full graph. Combined with random rotation of peers, I think that should be sufficient. We should be able to test this hypothesis with gossip graph snapshots, similar to Fabian’s 2nd figure below.
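As a toy model of why random peer rotation helps (a simplifying simulation, not how any implementation actually schedules gossip): each round, every node that knows a message pushes it to `fanout` freshly chosen random peers, so no fixed neighbourhood can wall off a group of nodes.

```python
import random


def rounds_to_full_coverage(n_nodes: int, fanout: int, seed: int = 0,
                            max_rounds: int = 1000) -> int:
    """Simulate push gossip where every informed node picks `fanout` new
    random peers each round (peer rotation). Returns the number of rounds
    until every node has the message, or -1 if max_rounds is hit first."""
    rng = random.Random(seed)
    informed = {0}  # node 0 originates the message
    for round_no in range(1, max_rounds + 1):
        newly = set()
        for _node in informed:
            # A fresh random choice of peers every round models rotation.
            newly.update(rng.sample(range(n_nodes), fanout))
        informed |= newly
        if len(informed) == n_nodes:
            return round_no
    return -1
```

The model ignores the pull-based catchup paths above, which only add more ways for a message to arrive.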

I agree, and I found something similar: if one peer of a channel is offline, IIUC the other peer would still rebroadcast the channel_announcement and the channel_update for its side of the channel, so the channel would still be known to the network. However, if the channel_update for the other direction is stale or missing, a payment in either direction would likely fail. In practice, I think implementations ‘prune’ channels that are missing fee info for one side from their graph view when performing pathfinding.
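A minimal sketch of that pruning rule as I understand it (not copied from any implementation): keep a channel for pathfinding only if both directions have a channel_update newer than a staleness cutoff. The two-week cutoff is the one BOLT 7 gives for when a node MAY prune a channel; the `ChannelView` structure is hypothetical.

```python
from dataclasses import dataclass

TWO_WEEKS = 1_209_600  # seconds; BOLT 7's staleness threshold for pruning


@dataclass
class ChannelView:
    scid: str
    # Latest channel_update timestamp per direction (0 or 1); missing = never seen.
    update_ts: dict[int, int]


def usable_for_pathfinding(ch: ChannelView, now: int,
                           max_age: int = TWO_WEEKS) -> bool:
    """Keep a channel only if both directions have a recent channel_update."""
    return all(
        d in ch.update_ts and now - ch.update_ts[d] <= max_age
        for d in (0, 1)
    )


def count_pruned(channels: list[ChannelView], now: int) -> int:
    """Count announced channels that this rule would drop from the graph."""
    return sum(not usable_for_pathfinding(ch, now) for ch in channels)
```

Counting with `count_pruned` over a graph snapshot, with and without the staleness condition, would separate ‘one side missing’ from ‘one side stale’.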

I found that many channels are in this state, but I need to refine my methodology there.

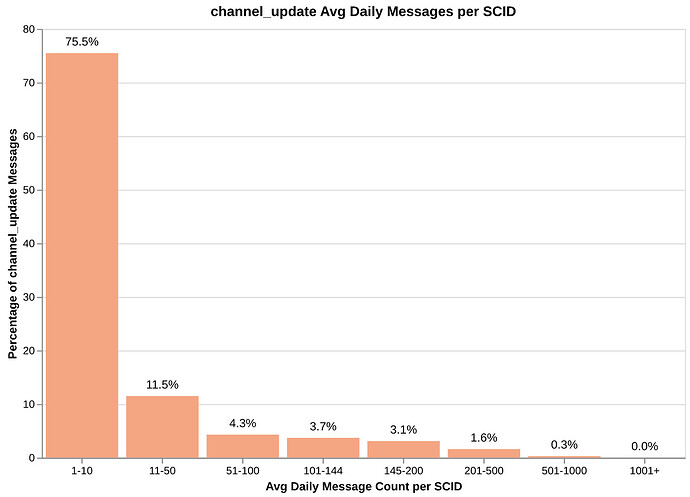

I see the same behavior, at least for channel updates (02/05-02/12):

This only compares the ‘outer’ message, with its signature and timestamp; I haven’t yet compared the ‘inner’ fields as you suggested, to see how many messages are just ‘refreshes’ of the same content.
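For the ‘inner’ comparison, one approach is to key each channel_update on its policy fields only, dropping the signature and timestamp, and count how many updates repeat the previous content for the same channel direction. Field names follow BOLT 7; representing each message as a dict is an assumption about how the data is stored.

```python
# channel_update fields carrying policy content (per BOLT 7); the signature
# and timestamp are deliberately excluded.
CONTENT_FIELDS = (
    "message_flags", "cltv_expiry_delta", "htlc_minimum_msat",
    "fee_base_msat", "fee_proportional_millionths", "htlc_maximum_msat",
)


def count_refreshes(updates: list[dict]) -> tuple[int, int]:
    """Return (refreshes, total): a refresh is an update whose content
    matches the previous update for the same channel direction."""
    last_content: dict[tuple, tuple] = {}
    refreshes = 0
    for upd in sorted(updates, key=lambda u: u["timestamp"]):
        # Bit 0 of channel_flags is the direction; the higher bits
        # (e.g. the disable flag) are treated as content.
        key = (upd["short_channel_id"], upd["channel_flags"] & 1)
        content = tuple(upd[f] for f in CONTENT_FIELDS) + (upd["channel_flags"] >> 1,)
        if last_content.get(key) == content:
            refreshes += 1
        last_content[key] = content
    return refreshes, len(updates)
```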

I meant positions in the sense of detected communities. Rephrased: “Does a node’s position in the payment channel network affect its view of the network state, which is constructed from the gossip messages it receives over time?”

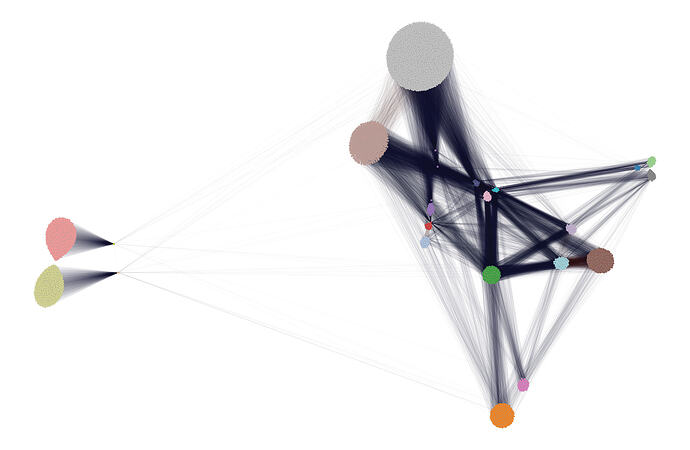

I used stochastic block modelling with the edge capacities as an extra parameter to perform community detection, and got this result:

I’ll go into this in more detail in a deep-dive post on the BNOC forum later this week, but the tl;dr is that I found 21 communities and 9 communities-of-communities.

A large portion of the node count is attributable to one entity, Lightning Network Token. They sell preconfigured nodes that seem to open a very small public channel to their hub node. These are the 4 communities on the far left, ~2000 nodes in total.

The grey community is mostly Tor-only nodes with only 1-3 channels to the network ‘core’, specifically to nodes that accept low-capacity channels, such as 1ML or CoinGate. That’s ~5600 nodes.

Since the nodes in these communities have very few payment channels, and assuming that massive hub nodes like 1ML aren’t actually forwarding all gossip traffic to all of their channel peers (since that is a lot of traffic and system load), these nodes may lag behind in their view of the network. With random gossip peer selection, the odds that the selected peer has very few payment channels are high.
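To make ‘the odds are high’ concrete: if a node picks k distinct gossip peers uniformly at random from N nodes, of which L are low-channel nodes like those in these communities, the chance of picking only (or at least one) low-channel node is hypergeometric. N, L and k would have to come from the data; none are measured here.

```python
from math import comb


def prob_all_low_degree(n_total: int, n_low: int, k: int) -> float:
    """Probability that k distinct peers, drawn uniformly at random from
    n_total nodes, all come from the n_low low-channel nodes."""
    if k > n_total:
        raise ValueError("cannot pick more peers than there are nodes")
    return comb(n_low, k) / comb(n_total, k)


def prob_any_low_degree(n_total: int, n_low: int, k: int) -> float:
    """Probability that at least one of the k peers is a low-channel node."""
    if k > n_total:
        raise ValueError("cannot pick more peers than there are nodes")
    return 1 - comb(n_total - n_low, k) / comb(n_total, k)
```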

Gossip-observer has one LDK node connected to each community shown here, and I’m collecting snapshots of each node’s gossip graph every few hours, so I should be able to validate this hypothesis from that data.
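One possible way to turn those snapshots into a lag measure: at each snapshot time, take the union of all observer nodes’ views, then for each node count the channel directions it is missing and how far behind its latest channel_update timestamps are compared to the freshest one any observer has. The snapshot format (scid and direction mapped to the latest timestamp) is an assumption.

```python
import statistics

# A snapshot maps (short_channel_id, direction) -> latest channel_update timestamp.
Snapshot = dict[tuple[str, int], int]


def view_lag(snapshots: dict[str, Snapshot]) -> dict[str, dict[str, float]]:
    """Per observer node: channel directions missing relative to the union
    of all views, and the median staleness (seconds) of the entries it has,
    relative to the freshest timestamp any observer has."""
    freshest: Snapshot = {}
    for snap in snapshots.values():
        for key, ts in snap.items():
            freshest[key] = max(ts, freshest.get(key, ts))

    report = {}
    for node, snap in snapshots.items():
        lags = [freshest[key] - ts for key, ts in snap.items()]
        report[node] = {
            "missing": float(len(freshest.keys() - snap.keys())),
            "median_lag_s": statistics.median(lags) if lags else 0.0,
        }
    return report
```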

The notes on your methodology were very useful, thanks again! I should be able to perform similar deduplication on my collected data and cross-check it against your results. I also don’t have an explanation for the peak around 0 (some messages not being widely received), but we may be able to get some hints from the message contents.

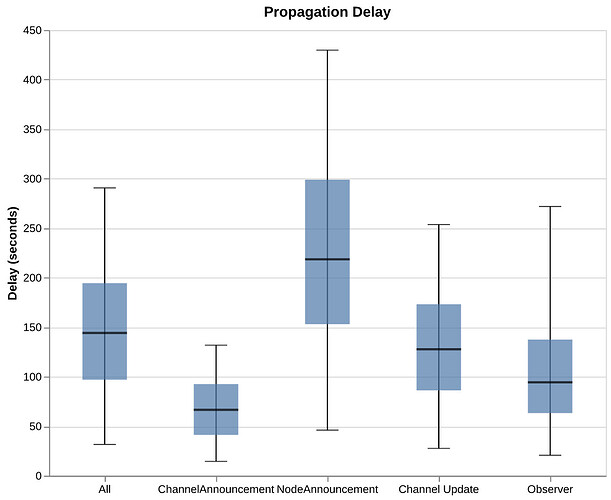

Good to see you on this thread! My propagation delay results are quite similar to yours, which is reassuring.

This was for 2026-02-05 to 2026-02-12. I haven’t re-run this on the data collected since the 12th.

The box plots show P05, P25, P50, P75, and P95. I was puzzled by the large difference in P50 across message types, but I think LND processes announcements before updates, so they may also be forwarded with different delays.
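For anyone reproducing these, the five percentiles can be computed from per-message delays with the standard library: `statistics.quantiles` with `n=20` returns the 5% cut points, so P05/P25/P50/P75/P95 are indices 0, 4, 9, 14 and 18. Its default interpolation method may differ slightly from whatever produced the plots.

```python
import statistics


def box_percentiles(delays_s: list[float]) -> dict[str, float]:
    """P05, P25, P50, P75 and P95 of propagation delays (seconds)."""
    cuts = statistics.quantiles(delays_s, n=20)  # 19 cut points: P05..P95
    return {
        "P05": cuts[0], "P25": cuts[4], "P50": cuts[9],
        "P75": cuts[14], "P95": cuts[18],
    }
```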

The rightmost plot, ‘Observer’, is for messages sent by the gossip-observer nodes themselves. I opened 7 channels, and I’m sending random channel updates for each of them on an interval to generate new messages. I think the lower P50 is just due to knowing the exact time of message creation, vs. the expected one-hop propagation delay of 30 seconds for other gossip messages (from the 60-second batching timer).
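The 30-second figure follows from the batching timer: if a message arrives at a uniformly random point within a node’s 60-second flush interval, it waits 60 / 2 = 30 seconds on average before being forwarded. A quick Monte Carlo check of that assumption, which ignores network latency and validation time:

```python
import random


def mean_batch_wait(interval_s: float = 60.0, trials: int = 100_000,
                    seed: int = 1) -> float:
    """Average wait until the next periodic flush for arrivals at uniformly
    random times within the interval. Analytically this is interval_s / 2."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        arrival = rng.uniform(0.0, interval_s)  # time since the last flush
        total += interval_s - arrival           # time until the next flush
    return total / trials
```

Over several hops with independent timers, the expected batching delay adds up to roughly 30 seconds per hop under the same assumption.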

Good to see high-ish overlap here! I think a good next question is whether the non-overlapping data is always for the same parts of the graph, message types, message contents, etc. I’ll try to run the same analysis.
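A sketch of that breakdown, assuming each observer’s data can be reduced to a set of message identifiers (e.g. a hash of the deduplicated message) tagged with a message type; these names are placeholders, not gossip-observer’s actual schema.

```python
from collections import Counter

# Each message is (message_id, message_type), e.g. ("ab12...", "channel_update").
Message = tuple[str, str]


def overlap_report(mine: set[Message], theirs: set[Message]) -> dict:
    """Jaccard overlap of two observers' message sets, plus per-type counts
    of the messages that only one side saw."""
    union = mine | theirs
    jaccard = len(mine & theirs) / len(union) if union else 1.0
    return {
        "jaccard": jaccard,
        "only_mine": Counter(t for _, t in mine - theirs),
        "only_theirs": Counter(t for _, t in theirs - mine),
    }
```

The same grouping could be keyed on short_channel_id or community label instead of message type, to check whether the gaps cluster in particular parts of the graph.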

It would be interesting to see your stats on the messages I’m generating, since for those we know the origin location and time. As I’m running a custom version of LDK Node, I’m not sure how other implementations with stock settings see my channels.

I’ll think more about interesting queries as well; I’m still refining that myself, tbh.

P.S. Thank you to emzy and SchroedingersCat for accepting my low-value channel open requests, and a hat tip to Megalithic for running a routing node specifically for smaller-value channels.